Out-of-the-Box AI Applications. Extensible by Design. One Interface.

Every application runs on your infrastructure, connected to your data, governed by your policies.

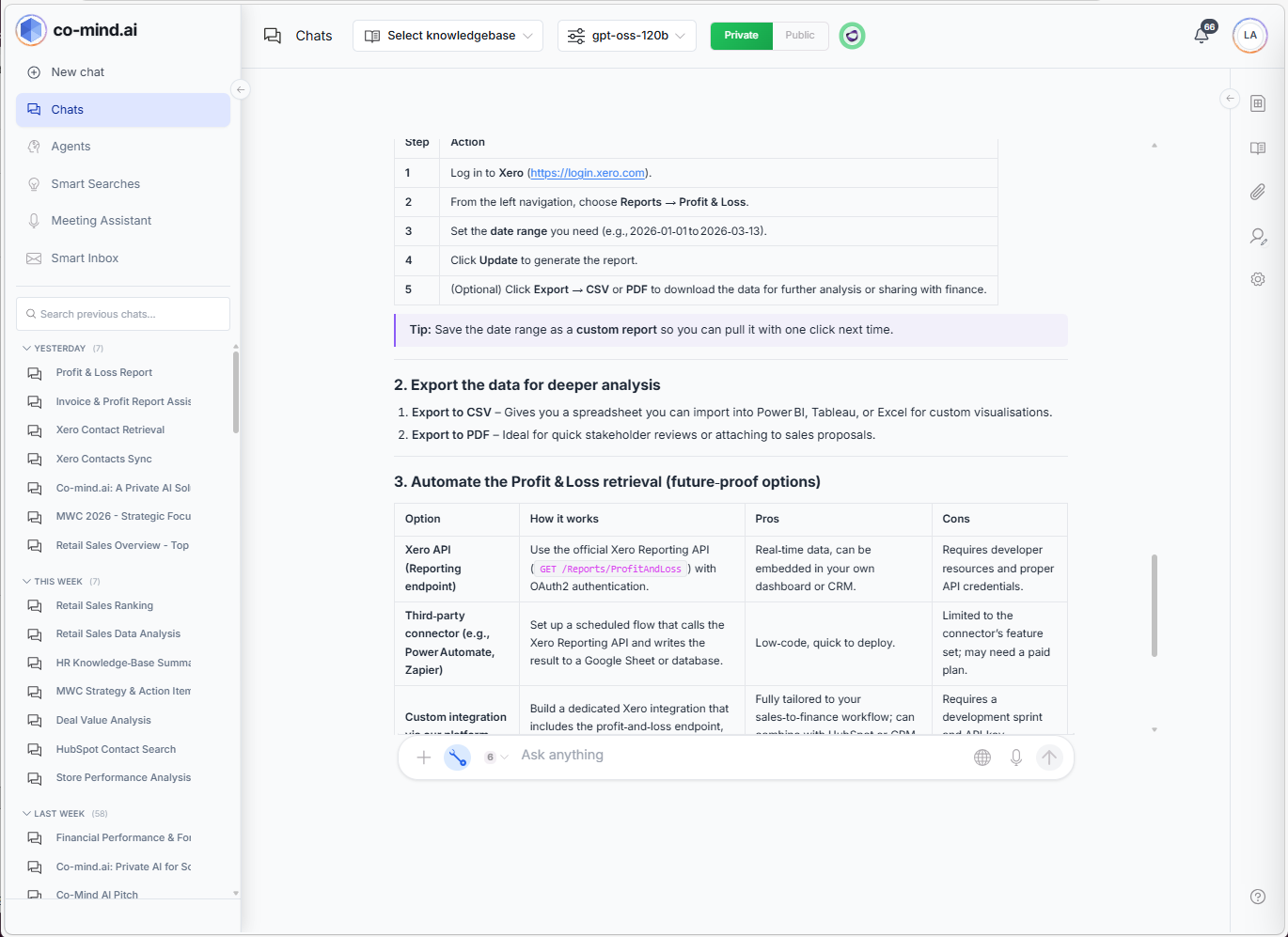

AI Chat & Knowledge Search

“Ask your data anything.”

Your private AI — connected to your actual knowledge bases. Hybrid search across every document you’ve indexed. Switch models per conversation.
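For readers wondering what "hybrid search" can look like in practice, here is a minimal sketch that merges a keyword ranking and a semantic (vector) ranking with reciprocal rank fusion. This is an illustration under assumptions, not a description of the product's internals: the fusion method, the function name `reciprocal_rank_fusion`, the constant `k=60`, and the document IDs are all hypothetical.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into a single ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by more than one retriever rise to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from two retrievers for the same query.
keyword_hits = ["doc_a", "doc_b", "doc_c"]   # e.g. from a keyword index
semantic_hits = ["doc_c", "doc_a", "doc_d"]  # e.g. from a vector index

fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
print(fused)
```

Documents that appear in both lists (`doc_a`, `doc_c`) outrank those found by only one retriever, which is the usual motivation for combining the two kinds of search.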

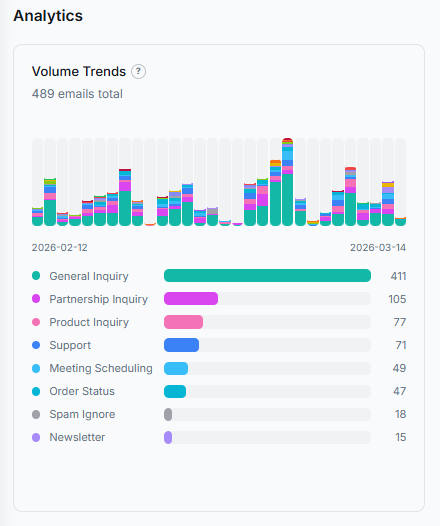

Smart Inbox

“Your inbox, sorted by AI.”

Full Microsoft Exchange integration with AI-powered categorization, semantic search, and smart reply generation in five professional tones. Cuts email time by 80%.

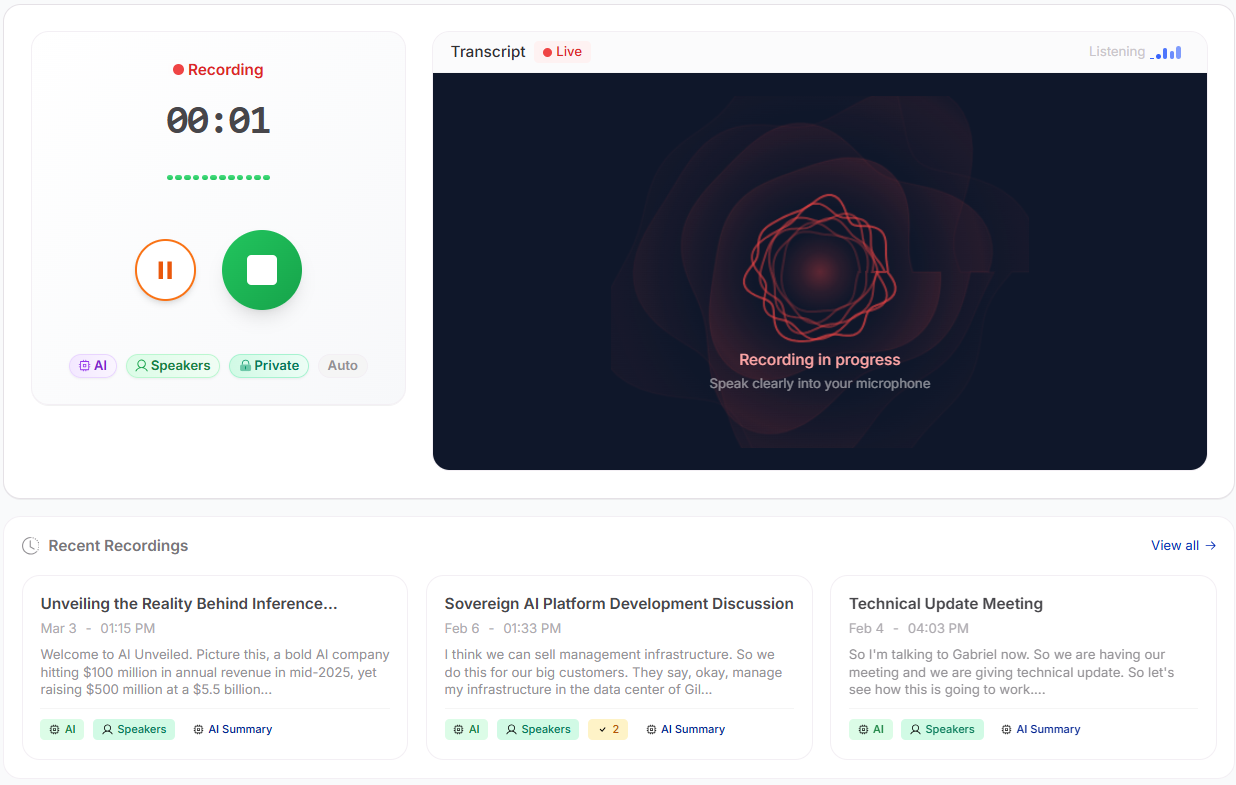

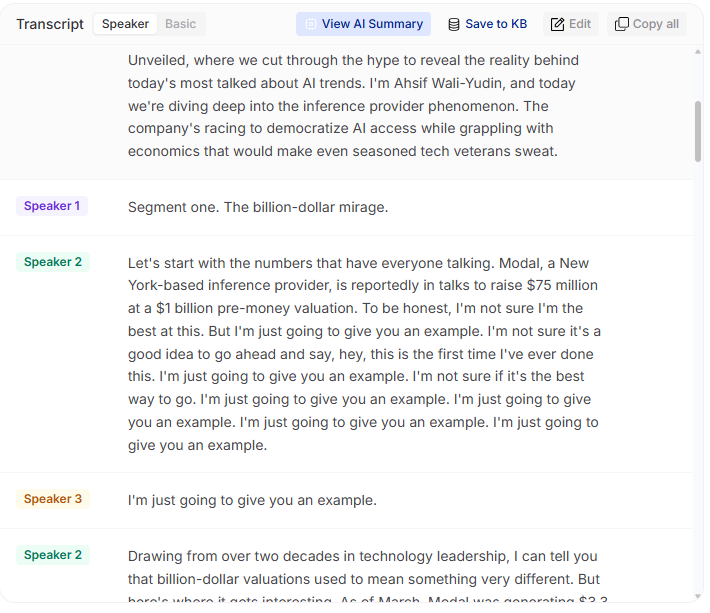

Meeting AI

“Every meeting. Summarized.”

Real-time transcription with speaker identification. AI-generated summaries, action items, and searchable transcripts. Automatic detection of 100+ languages.

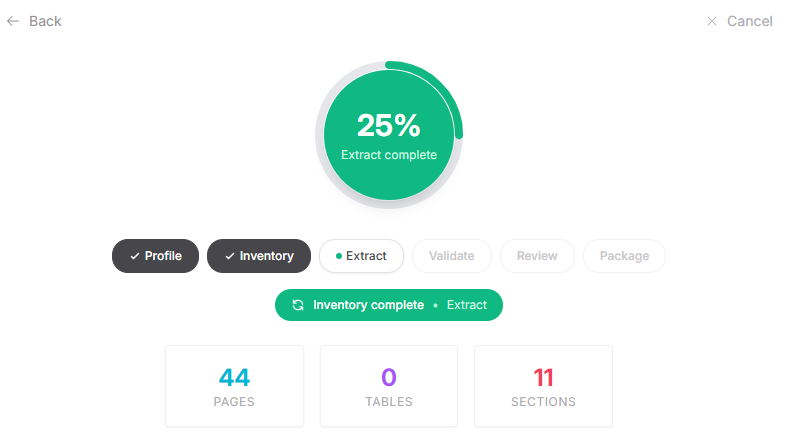

Document Analyzer

“Upload any document. Get structured data.”

Autonomous document analysis that discovers structure and extracts data without predefined schemas. Works on contracts, invoices, and financial statements. 97.9% accuracy on table extraction.

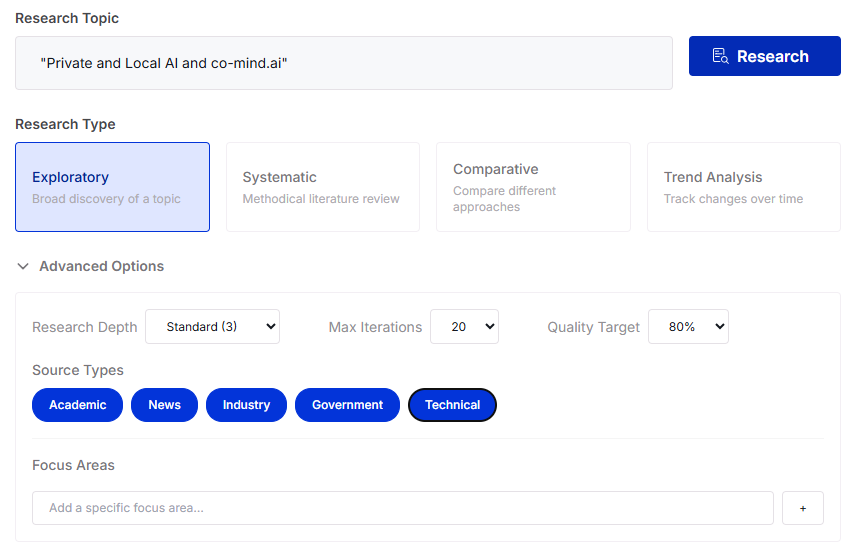

AI Researcher

“Deep research in seconds.”

Iterative research agent that searches the web, analyzes sources, detects bias, and synthesizes comprehensive reports automatically using THINK / ACT / OBSERVE reasoning loops.
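The THINK / ACT / OBSERVE pattern mentioned above can be pictured as a simple loop: decide what to look up next, perform the lookup, then record what came back. The sketch below is a toy illustration under assumptions, not the product's implementation. Every name in it (`ResearchState`, `think`, `act`, `observe`, `run_research`) is hypothetical, the search step is a stub that touches no network, and the three-note stopping rule is arbitrary.

```python
from dataclasses import dataclass, field


@dataclass
class ResearchState:
    question: str
    notes: list = field(default_factory=list)


def think(state):
    """THINK: pick the next query, or return None when enough is gathered."""
    if len(state.notes) >= 3:  # arbitrary budget, for illustration only
        return None
    return f"{state.question} (angle {len(state.notes) + 1})"


def act(query):
    """ACT: stand-in for a web search; a real agent would call a search tool."""
    return f"stub result for: {query}"


def observe(state, result):
    """OBSERVE: fold the new result back into the agent's working notes."""
    state.notes.append(result)


def run_research(question, max_steps=10):
    state = ResearchState(question)
    for _ in range(max_steps):  # hard cap so the loop always terminates
        query = think(state)
        if query is None:
            break
        observe(state, act(query))
    return state.notes


notes = run_research("solid-state battery supply chains")
for note in notes:
    print(note)
```

In a real agent, the THINK step would typically be a model call that reads the accumulated notes, and a final synthesis step would turn those notes into the report.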

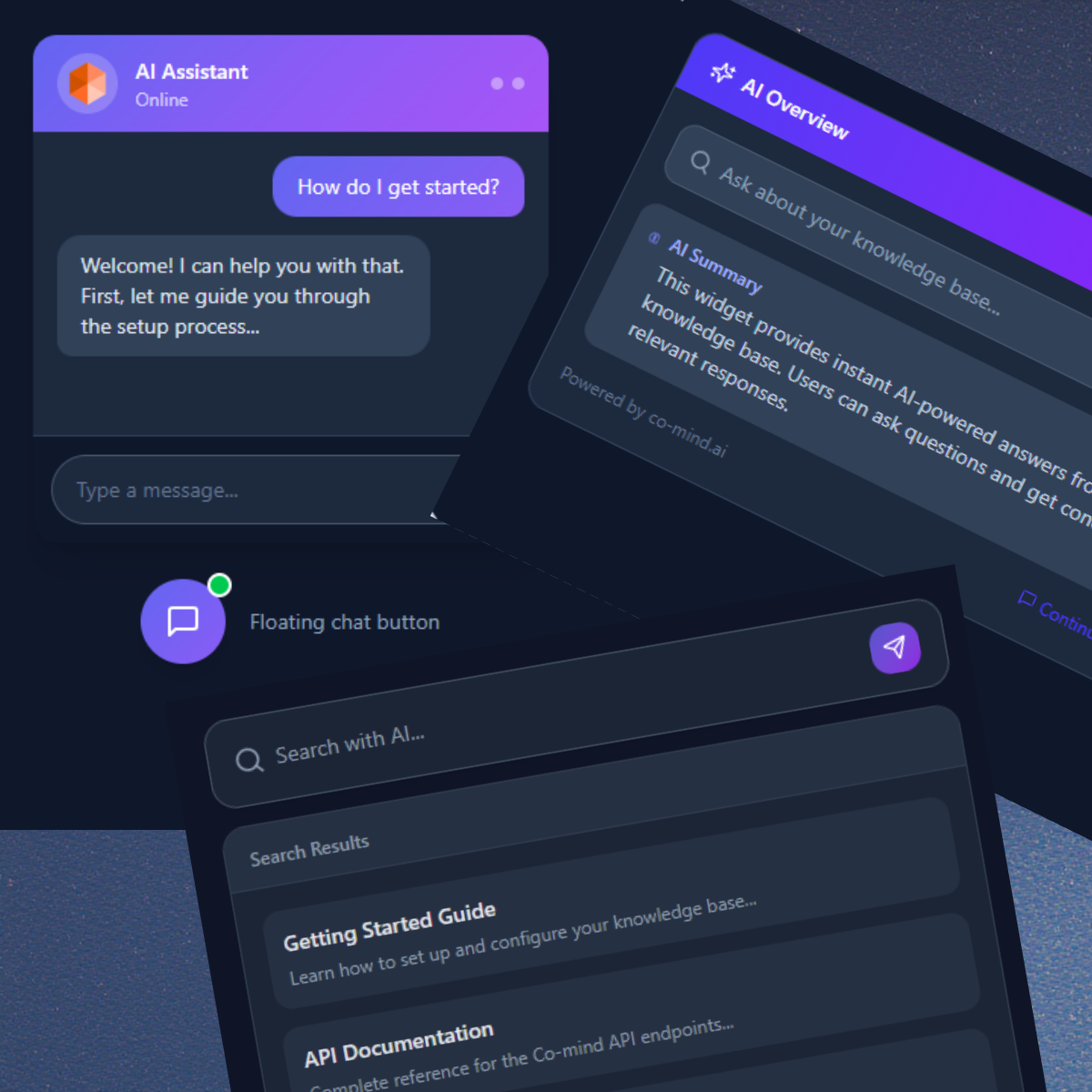

Embeddable Widgets

“Add AI to any website in 5 minutes.”

Drop-in chat widgets for your website, portal, or intranet. Three widget types, six pre-built templates, full branding control, and five language options.

Voice Assistants

ASR, TTS & Transcription.

Two-tier voice processing architecture with CPU and GPU support. Automatic speech recognition, text-to-speech, and real-time transcription — all running privately on your infrastructure.